Intel Reclaims AI Relevance: A Deep Dive into the CPU Comeback

The Shifting Sands of AI: From GPU Dominance to CPU Resurgence

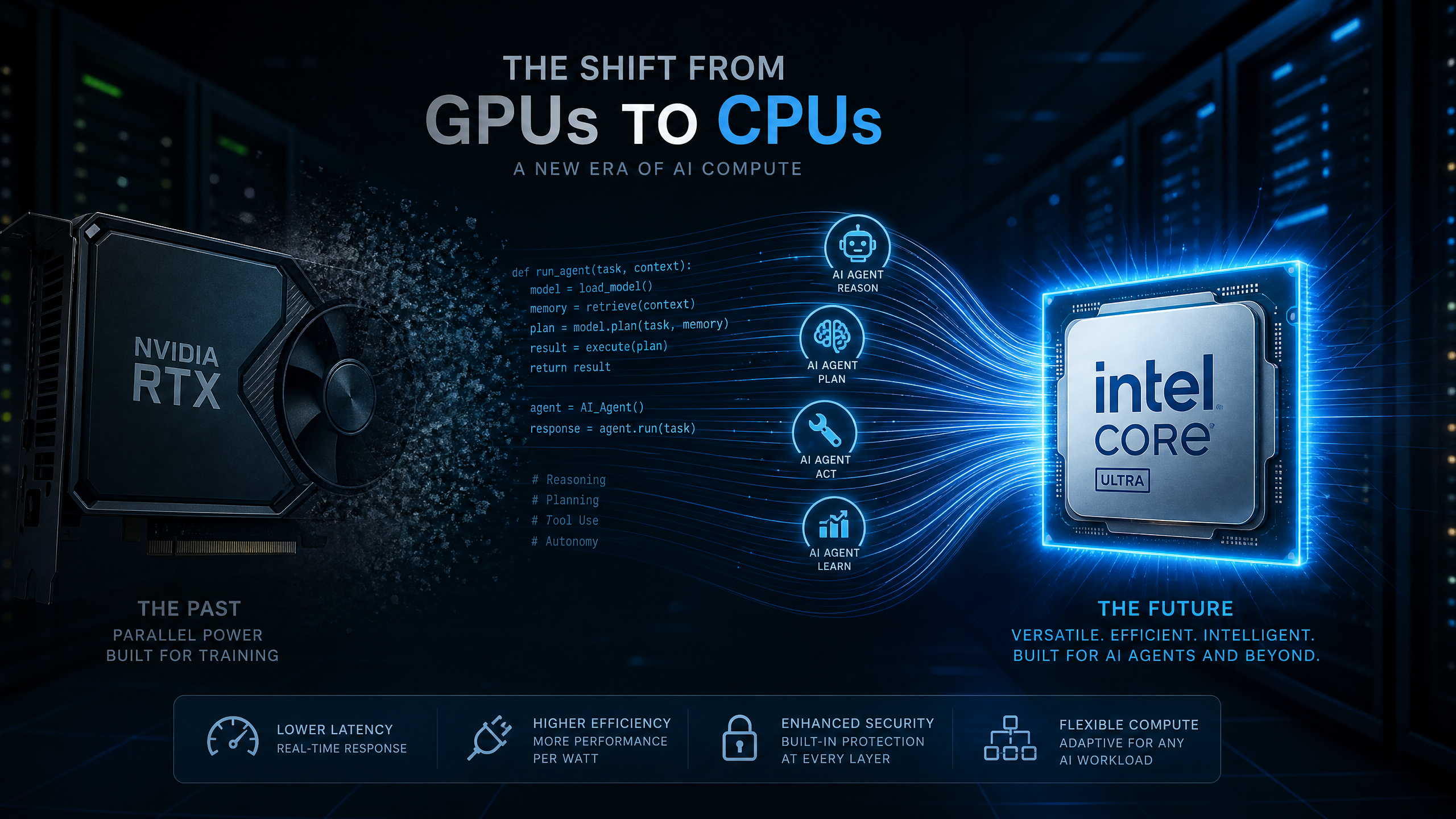

For years, the narrative surrounding artificial intelligence has been dominated by the prowess of Graphics Processing Units (GPUs). Nvidia, in particular, has ridden this wave to unprecedented heights, with its GPUs becoming the de facto standard for training large language models and complex AI algorithms. However, a quiet but significant shift is underway in the semiconductor industry, one that is bringing Central Processing Units (CPUs) back into the spotlight. Intel, long perceived as a laggard in the GPU-centric AI race, is now staging a remarkable comeback, driven by the burgeoning demand for AI inference and agentic AI systems.

This resurgence is not merely a fleeting trend; it represents a fundamental re-evaluation of AI infrastructure. As AI applications move beyond the intensive training phase and into real-world enterprise deployments, the requirements for processing power are evolving. The focus is shifting towards inference workloads – the real-time application of trained AI models to new data – and the deployment of agentic AI systems, which are designed to operate autonomously and interact with their environments. These new demands are playing directly into Intel's strengths, making CPUs mission-critical once again.

Intel's Q1 2026 Earnings: A Turning Point

Intel's Q1 2026 earnings report underscored this changing landscape. The company beat Wall Street expectations for the quarter and issued sharply improved guidance. The performance was fueled primarily by stronger-than-anticipated demand in its Data Center and AI business, along with resilient PC sales and accelerating foundry momentum [1].

The Data Center and AI division was the standout, benefiting from a surge in enterprise demand for Xeon CPUs; Quartz reported that the segment's revenue rose 22% [2]. This demand is directly linked to the increasing adoption of AI infrastructure that prioritizes inference and deployment over pure model training. The company also reported healthier margins and improved operational cash flow, reinforcing investor confidence in Intel's strategic turnaround. While GAAP profitability was affected by restructuring and impairment charges, the market largely focused on Intel's operational momentum and its growing influence in the next phase of AI infrastructure.

Key Financial Highlights (Q1 2026)

| Metric | Performance | Impact |
|---|---|---|
| Revenue | Significantly outperformed expectations | Driven by Data Center and AI, PC sales, and foundry momentum |
| Adjusted Earnings | Major quarterly beat | Signals improved operational efficiency and market positioning |
| Data Center and AI Growth | Stronger-than-anticipated demand | Reflects increasing adoption of AI inference and deployment |
| Margins | Improved | Indicates better cost management and pricing power |
| Operational Cash Flow | Improved | Provides capital for continued investment and innovation |
| Guidance (Q2) | Well above analyst forecasts | Reinforces confidence in sustained growth and recovery |

The Rise of AI Inference and Agentic Systems

Intel's strong quarter validates a crucial market shift: AI inference is rapidly becoming the next major semiconductor battleground. While GPUs remain indispensable for the computationally intensive task of training large language models, CPUs are proving increasingly essential for inference workloads. These workloads involve the real-time processing that underpins AI agents, enterprise automation, and a wide array of user-facing AI applications.

According to Intel CEO Lip-Bu Tan, the next wave of AI is characterized by a move from foundational models to inference and agentic systems. This transition is materially increasing demand for Intel's Xeon CPUs, advanced packaging solutions, and foundry capabilities [2]. The distinction between training and inference is critical here. Training an AI model involves feeding it vast amounts of data to learn patterns and make predictions. Inference, on the other hand, is the process of using that trained model to make predictions or decisions on new, unseen data. As businesses deploy thousands of AI agents across various workflows, the demand for CPUs can rise substantially due to the higher CPU-to-GPU ratios typically found in production environments.
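The training/inference distinction can be made concrete with a toy example. The sketch below is illustrative only and is not drawn from Intel's materials: it fits a one-feature linear model with plain gradient descent (training, many passes over the full dataset) and then applies the learned parameters to a new input (inference, one cheap forward pass). The function names `train` and `infer`, the dataset, and the hyperparameters are all assumptions chosen for the illustration.

```python
# Toy illustration only: a one-feature linear model, no external libraries.
# All names, data, and hyperparameters here are hypothetical.

def train(xs, ys, lr=0.05, epochs=2000):
    """Training: repeatedly pass over the whole dataset to learn w and b."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def infer(model, x):
    """Inference: a single forward pass on new, unseen input."""
    w, b = model
    return w * x + b


# Made-up data that follows y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

model = train(xs, ys)            # expensive: epochs * len(xs) gradient terms
prediction = infer(model, 10.0)  # cheap: one multiply and one add
print(f"w={model[0]:.3f}, b={model[1]:.3f}, prediction={prediction:.3f}")
```

Even at this scale the asymmetry is visible: training loops over the data thousands of times, while each inference call is a single small computation that is repeated for every incoming request once the model is deployed.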

Real-World Examples of AI Inference and Agentic AI

- Healthcare Diagnostics: AI models trained to detect diseases from medical images (e.g., X-rays, MRIs) need fast inference at the point of care, which CPUs can provide, to support quick and accurate diagnoses. This can reduce human error and speed up critical decision-making. For instance, an AI agent could analyze a patient's symptoms and medical history in real time to suggest potential diagnoses and treatment plans to a physician.

- Autonomous Vehicles: Self-driving cars rely heavily on real-time inference to process sensor data, identify objects, predict movements, and make instantaneous driving decisions. The low-latency requirements of such systems necessitate efficient CPU performance.

- Financial Fraud Detection: AI systems in finance continuously analyze transaction data to identify fraudulent activities. This involves high-volume, low-latency inference to flag suspicious transactions as they occur, preventing significant financial losses.

- Personalized Customer Service: AI-powered chatbots and virtual assistants use inference to understand user queries, retrieve relevant information, and provide personalized responses in real time, enhancing customer experience and reducing operational costs.

- Industrial Automation: In smart factories, AI agents monitor production lines, predict equipment failures, and optimize manufacturing processes. This requires continuous inference on sensor data to ensure smooth and efficient operations.

These examples highlight the practical implications of the shift towards inference. As AI becomes more integrated into daily operations across industries, the need for efficient and scalable CPU-based inference solutions will only grow.

Intel's Multi-Engine Turnaround Strategy

Intel's impressive rebound is not solely attributable to the rising demand for AI inference. The company has been executing a multi-faceted turnaround strategy under CEO Lip-Bu Tan, focusing on several key areas:

Xeon Server Momentum

Demand for Intel's Xeon server CPUs in AI data centers has significantly exceeded expectations. Management has highlighted supply tightness, indicating robust market adoption and a strong competitive position. This momentum is crucial as data centers form the backbone of modern AI infrastructure.

Intel Foundry Expansion

Intel's foundry business, which manufactures chips for other companies, has also delivered meaningful growth. Strategic wins with major partners like Tesla and Google underscore the increasing commercial credibility of Intel's manufacturing ambitions. Serving external customers reduces the foundry's reliance on Intel's own product lines and opens new revenue streams.

Process Technology Leadership

Intel's progress in advanced process technologies is central to its strategic reset. By investing heavily in cutting-edge manufacturing processes, Intel aims to regain its leadership in chip production, giving it a stronger competitive position for both its own products and its foundry services. This technological edge is vital for producing the high-performance, energy-efficient CPUs required for advanced AI workloads.

Risks and Future Outlook

While Intel's turnaround is gaining momentum, investors should keep the risks in mind. The sharp rerating of Intel's stock has significantly raised expectations, placing greater pressure on the company to execute consistently. Management has acknowledged that demand currently exceeds supply, which is bullish in the near term but also introduces potential capacity and operational challenges.

However, the more significant test will be proving that inference-led CPU demand is not merely a temporary cycle but a durable structural shift that can support Intel's longer-term AI growth story. The company's ability to scale its manufacturing capacity and continue innovating in CPU architecture will be critical to maintaining its competitive edge against rivals like AMD and specialized AI chip developers.

Conclusion: Intel as a Strategic AI Infrastructure Player

Intel's latest quarter may well be remembered as a pivotal moment, marking the market's recognition that AI infrastructure extends far beyond GPUs. As AI inference, enterprise deployment, and agentic AI continue to scale globally, Intel is strategically positioned to capture a meaningful share of the next phase of AI monetization. This transforms Intel's narrative from that of a legacy chipmaker struggling to keep pace to that of a strategic AI infrastructure player poised for sustained growth.

The company's renewed focus on CPUs for inference, coupled with its advancements in foundry services and process technology, positions it as a critical enabler of the widespread adoption of AI. The future of AI is not just about training massive models; it's about deploying them effectively and efficiently in every corner of the enterprise, and in this evolving landscape, Intel's CPUs are proving to be indispensable.

References

[1] Intel Reports First-Quarter 2026 Financial Results - Intel Investor Relations

[2] Intel’s data center and AI revenue jumps 22% as quarterly sales top Wall Street estimates - Quartz