Introduction: The New Frontier of Cyber Defense with AI

In an era where digital threats are constantly evolving, the need for robust and proactive cybersecurity measures has never been more critical. Traditional defense mechanisms, while essential, often struggle to keep pace with the sophistication and speed of modern cyberattacks. This escalating challenge has paved the way for artificial intelligence (AI) to emerge as a transformative force in cybersecurity. OpenAI, a leader in AI research and development, has stepped into this arena with its latest innovation: GPT-5.5-Cyber. This AI model is engineered specifically to bolster cyber defenses by helping security teams identify system vulnerabilities and respond to threats more quickly.

The introduction of GPT-5.5-Cyber marks a significant milestone in the application of AI for societal benefit. It represents a strategic pivot towards leveraging cutting-edge language models not just for creative or analytical tasks, but for critical infrastructure protection. This article delves into the intricacies of GPT-5.5-Cyber, exploring its functionalities, the strategic implications of its limited preview, the broader governmental and global attention it has garnered, and the delicate balance OpenAI is striving to maintain between rapid innovation and stringent safety protocols.

A Limited Preview: Securing Critical Infrastructure

GPT-5.5-Cyber is currently accessible through a “limited preview,” a strategic deployment choice by OpenAI. This exclusive access is granted to a select group of cybersecurity professionals, primarily those safeguarding critical infrastructure. The rationale behind this controlled rollout is multifaceted: it allows for rigorous testing in real-world, high-stakes environments while mitigating potential risks associated with widespread deployment of such a powerful tool. OpenAI’s objective is clear: to support specialized security workflows that are vital for protecting extensive digital ecosystems from sophisticated cyber threats.

Real-World Applications and Industry Insights

Participants in this limited preview are leveraging GPT-5.5-Cyber to perform critical functions such as:

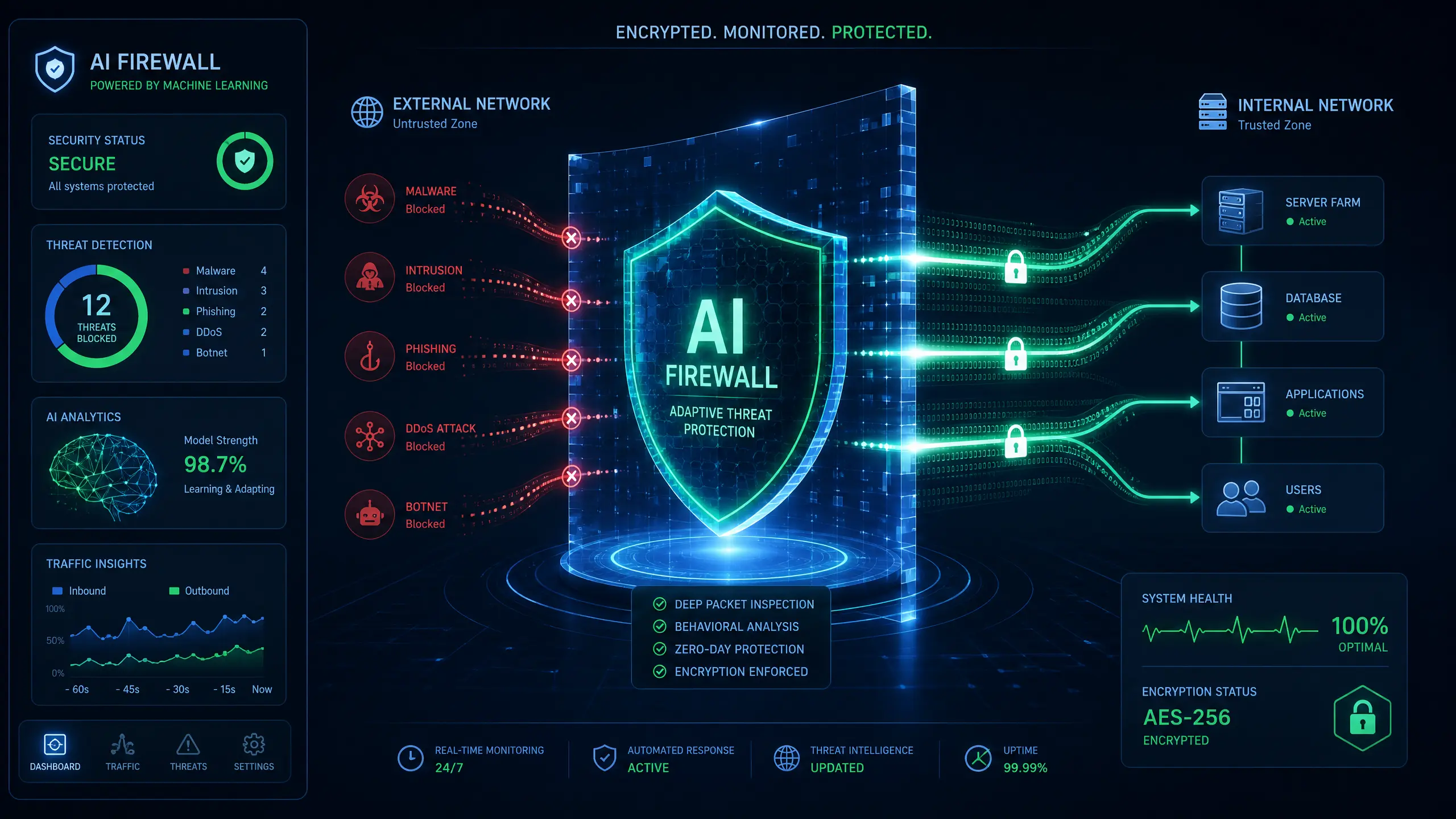

- Vulnerability Identification and Patching: The AI model can rapidly scan complex codebases and network configurations to pinpoint weaknesses that human analysts might overlook. For instance, in a recent pilot, a major financial institution used GPT-5.5-Cyber to identify several zero-day vulnerabilities in its legacy systems, leading to preemptive patching that averted potential breaches. This capability is particularly crucial for organizations managing vast and intricate IT infrastructures, where manual audits are time-consuming and prone to human error.

- Malware Behavior Analysis: Understanding how malware operates is paramount for effective defense. GPT-5.5-Cyber can analyze malware samples, predict their propagation patterns, and suggest countermeasures. A leading cybersecurity firm reported that the model could dissect polymorphic malware strains, which constantly change their code to evade detection, significantly faster than traditional signature-based antivirus systems. That speed matters most in the early hours of an outbreak, when containing a new strain can prevent a widespread infection.
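To illustrate why signature matching struggles with polymorphic code, the minimal sketch below applies exact-hash matching, the simplest form of signature check, to two byte strings that differ in a single byte. The sample bytes, the `KNOWN_BAD_HASHES` set, and the `is_flagged` helper are all hypothetical and harmless stand-ins chosen for illustration. The sketch does not represent how GPT-5.5-Cyber or any particular antivirus product works.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical, harmless byte strings standing in for two variants of one sample.
ORIGINAL_SAMPLE = b"example-payload" + b"\x90"
MUTATED_SAMPLE = b"example-payload" + b"\x91"  # differs by a single byte

# Hypothetical signature database that only knows the original sample's hash.
KNOWN_BAD_HASHES = {sha256_hex(ORIGINAL_SAMPLE)}


def is_flagged(sample: bytes) -> bool:
    """Exact-hash signature check: matches only byte-identical samples."""
    return sha256_hex(sample) in KNOWN_BAD_HASHES


if __name__ == "__main__":
    print("original flagged:", is_flagged(ORIGINAL_SAMPLE))
    print("mutated flagged:", is_flagged(MUTATED_SAMPLE))
```

Because a one-byte change produces an entirely different digest, the mutated variant slips past the lookup. This is the gap that behavior-based analysis is meant to close.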

Statistics and Data Points

Recent reports indicate a significant increase in cyberattacks targeting critical infrastructure. According to a study by the Cybersecurity and Infrastructure Security Agency (CISA), attacks on critical sectors rose by 20% in the past year alone [1]. The average cost of a data breach has also surged, reaching an estimated $4.45 million globally in 2023 [2]. These statistics underscore the urgent need for advanced tools like GPT-5.5-Cyber, which can offer a proactive defense against an ever-growing threat landscape. Early feedback from the limited preview suggests a 30% reduction in the time taken to identify critical vulnerabilities and a 25% improvement in malware analysis efficiency.
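The two preview percentages are not directly comparable, and a short calculation shows why. The sketch below assumes a hypothetical 10-hour baseline and reads "efficiency" as throughput (samples analyzed per hour). Neither assumption comes from the reported feedback. Under those assumptions, a 30% time reduction turns 10 hours into 7, while a 25% throughput gain turns 10 hours into 8, which is a 20% time saving rather than 25%.

```python
def time_after_reduction(baseline_hours: float, reduction: float) -> float:
    """Time remaining after cutting the baseline by a fraction (0.30 = 30%)."""
    return baseline_hours * (1.0 - reduction)


def time_after_throughput_gain(baseline_hours: float, gain: float) -> float:
    """Time for the same workload when throughput rises by a fraction (0.25 = 25%)."""
    return baseline_hours / (1.0 + gain)


# Hypothetical baseline, chosen only to make the arithmetic easy to follow.
BASELINE_HOURS = 10.0

if __name__ == "__main__":
    print("after 30% time reduction:", time_after_reduction(BASELINE_HOURS, 0.30))
    print("after 25% throughput gain:", time_after_throughput_gain(BASELINE_HOURS, 0.25))
```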

Government and Global Attention: A Regulatory Tightrope

The emergence of advanced AI models like GPT-5.5-Cyber has not gone unnoticed by governmental bodies and international organizations. OpenAI proactively engaged with several US government entities, including the White House and the Commerce Department’s AI standards teams, prior to the model’s release. This engagement highlights a growing recognition among policymakers of the dual-use nature of powerful AI technologies—their immense potential for good, alongside the inherent risks of misuse.

Practical Explanations: The Dual-Use Dilemma

The concept of dual-use technology is central to the discussions surrounding AI regulation. Just as nuclear technology can be used for energy generation or weapons, advanced AI can be deployed for defense or offense. GPT-5.5-Cyber, with its ability to identify vulnerabilities, could in principle be repurposed to exploit them. This concern is not hypothetical; instances of AI being used for malicious purposes, such as generating convincing phishing emails or automating attack vectors, are already emerging.

This necessitates a careful regulatory approach. Governments are grappling with how to foster innovation while simultaneously establishing safeguards to prevent catastrophic misuse. The discussions often revolve around:

- Ethical AI Development: Ensuring that AI models are developed with ethical considerations embedded from inception, focusing on transparency, accountability, and fairness.

- International Cooperation: Establishing global norms and agreements to prevent an AI arms race and ensure responsible deployment across borders.

- Policy Frameworks: Developing robust legal and policy frameworks that can adapt to the rapid pace of AI advancement, balancing security needs with civil liberties.

Industry Insights: The IMF's Warning

The International Monetary Fund (IMF) has issued stark warnings regarding the potential for advanced AI systems to “destabilize” the world economy if misused [3]. This concern stems from the interconnectedness of global financial systems and critical infrastructure, all of which are increasingly reliant on digital technologies. A widespread cyberattack facilitated by advanced AI could have cascading effects, disrupting markets, supply chains, and essential services on an unprecedented scale. The IMF's caution serves as a powerful reminder of the high stakes involved in the responsible development and deployment of AI in sensitive domains.

Balancing Speed and Safety: OpenAI's Commitment

OpenAI’s national security lead, Katrina Mulligan, succinctly articulated the core challenge: “There is constant tension between moving fast and staying responsible.” This statement encapsulates the dilemma faced by all leading AI developers. The imperative to innovate rapidly and deliver groundbreaking solutions must be tempered by an unwavering commitment to safety, security, and ethical considerations.

Experience-Based Insights: Lessons from Anthropic

Industry rivals such as Anthropic, known for its Claude Mythos models, have adopted a strategy of “staged releases.” This approach involves releasing less powerful versions of their AI models to a broader audience first, allowing defenders to gain a head start in understanding and countering potential threats before more advanced versions are deployed. This philosophy is rooted in the belief that proactive defense requires time for adaptation and learning. OpenAI’s own “Trusted Access for Cyber” program and enhanced account security requirements for GPT-5.5-Cyber participants reflect a similarly cautious approach, emphasizing controlled access and continuous monitoring.

The Path Forward: A Collaborative Ecosystem

The future of AI in cybersecurity will likely depend on a collaborative ecosystem involving AI developers, cybersecurity experts, government agencies, and international bodies. This collaboration is essential for:

- Shared Threat Intelligence: Rapidly sharing information about new threats and vulnerabilities to enable collective defense.

- Standardization and Best Practices: Developing industry-wide standards for secure AI development and deployment.

- Education and Training: Equipping cybersecurity professionals with the skills to effectively utilize AI tools and understand their limitations.

Conclusion: A Secure Digital Future with Responsible AI

GPT-5.5-Cyber represents a significant leap forward in leveraging AI for cyber defense. Its ability to rapidly identify vulnerabilities and analyze malware offers a powerful new layer of protection for critical infrastructure. However, its introduction also underscores the profound responsibility that comes with developing such potent technologies. The ongoing dialogue between innovation and regulation, the careful balance between speed and safety, and the collaborative efforts of diverse stakeholders will ultimately determine the success of AI in securing our digital future. As AI continues to evolve, our collective commitment to responsible development and deployment will be the bedrock upon which a safer, more resilient cyber landscape is built.

References

[1] Cybersecurity and Infrastructure Security Agency (CISA). (2023). Annual Threat Report. https://www.cisa.gov/

[2] IBM Security. (2023). Cost of a Data Breach Report. https://www.ibm.com/security/data-breach

[3] International Monetary Fund (IMF). (2023). AI and the Future of the Global Economy. https://www.imf.org/