AI Governance: Securing Profit Margins in the Age of Autonomous AI

The rapid evolution of artificial intelligence, particularly the rise of autonomous AI systems, brings both unprecedented opportunities and significant challenges for enterprises. The promise of greater efficiency and innovation is compelling. So are the operational complexity and risk of deploying these systems. As organizations integrate AI into core business processes, stringent AI governance becomes essential. This article examines how AI governance helps secure profit margins, drawing on insights from SAP, and outlines the foundations required for successful, risk-mitigated AI adoption.

The Imperative of Precision: Beyond Statistical Guesses

In enterprise operations, the gap between near-perfect and absolute precision is decisive. Manos Raptopoulos, Global President of Customer Success Europe, APAC, Middle East & Africa at SAP, puts it succinctly: "The distance between 90% and 100% accuracy is not incremental. In our world, it is existential." This perspective signals a fundamental shift in how businesses must approach AI. Consumer-grade models, however impressive, often lack the deterministic control that critical business functions require. A small error in inventory management or financial forecasting, for instance, can compound into substantial profit erosion or a compliance breach.

As large language models (LLMs) and other AI systems move from experimentation into production, the criteria for evaluating them have become far more rigorous. The focus is no longer on novelty or potential alone, but on precision, governance, scalability, and tangible business impact. Corporate boards are now grappling with AI's transition from a passive analytical tool to an active digital actor that can make and execute decisions autonomously. Raptopoulos calls this transition the "primary governance moment," and it requires organizations to re-evaluate their existing operational frameworks and risk management strategies.

Navigating the Risks of Agent Sprawl

Autonomous AI agents can plan, reason, orchestrate, and execute workflows independently, which introduces a new layer of operational risk. Because these systems interact directly with sensitive data and can influence decisions at scale, they need governance as rigorous as that applied to human staff. Without it, organizations face agent sprawl: a proliferation of unmonitored agents that recalls the "shadow IT" crises of the past, but with potentially far greater consequences. Imagine an AI agent autonomously managing supply chain logistics. A misread data feed or an unmonitored deviation from policy could cause significant disruption, financial loss, and reputational damage.

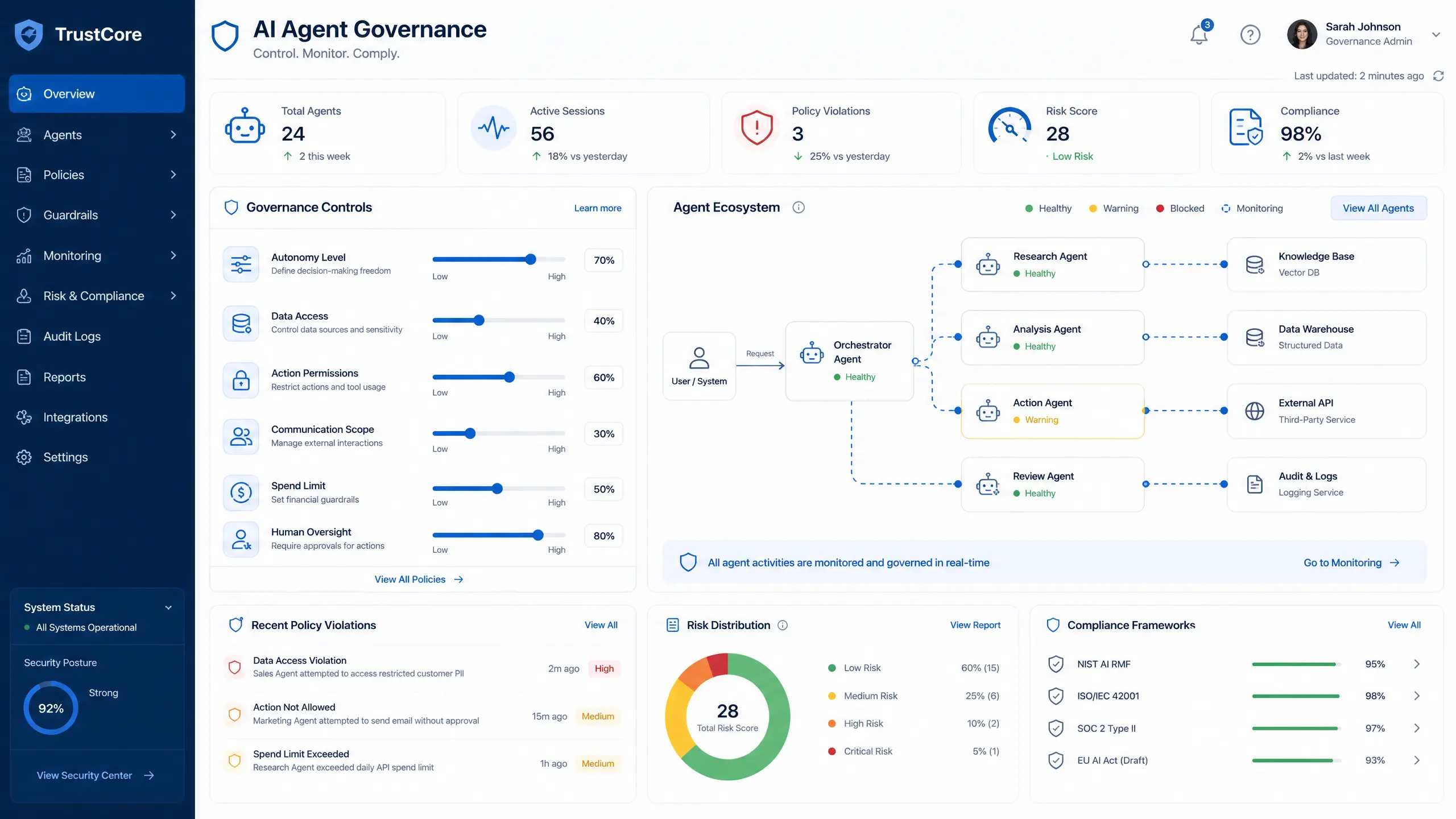

To counteract this, a comprehensive framework for agent lifecycle management is essential. This includes:

- Defining Autonomy Boundaries: Clearly delineating the scope of an AI agent's decision-making authority and intervention points.

- Enforcing Policy: Integrating business rules and compliance mandates directly into the AI's operational logic.

- Continuous Performance Monitoring: Implementing real-time oversight to detect anomalies, biases, or deviations from expected behavior.

- Establishing Audit Trails: Ensuring every AI-driven decision and action is traceable and accountable.

These measures turn governance from a compliance checklist into a hard engineering constraint, one that demands significant investment in infrastructure and expertise. Integrating modern vector databases with legacy relational architectures, for example, requires substantial engineering capital. Teams must tightly constrain the AI's inference loop so that hallucinations (plausible but incorrect outputs) cannot corrupt critical financial or supply chain execution paths. These strict parameters may increase latency and hyperscaler costs, but the reduced risk and greater reliability are expected to outweigh the upfront investment over the long term.
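The four lifecycle measures listed earlier, together with a constrained inference loop, can be illustrated by a small guard that sits between an agent and the systems it acts on. This is a minimal sketch under stated assumptions, not SAP's implementation: the action types in `ALLOWED_ACTIONS`, the invoice IDs in `KNOWN_INVOICES`, and the `guard` and `AuditLog` names are all hypothetical. The guard rejects any proposed action that falls outside the agent's autonomy boundary or refers to a record missing from the system of record (one simple hallucination check). It also writes every decision to a hash-chained audit log, so later edits to the log can be detected.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical autonomy boundary: the only action types this agent may take.
ALLOWED_ACTIONS = {"flag_invoice", "request_review"}
# Hypothetical stand-in for a system-of-record lookup (e.g. an ERP query).
KNOWN_INVOICES = {"INV-1001", "INV-1002"}


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of an audit entry."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only log; each entry embeds the previous entry's hash."""
    entries: list = field(default_factory=list)

    def record(self, event: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else ""
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "prev": prev,
            "event": event,
        }
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


def guard(action: dict, log: AuditLog) -> bool:
    """Allow an agent's proposed action only if it passes every policy check."""
    reasons = []
    if action.get("type") not in ALLOWED_ACTIONS:
        reasons.append("outside autonomy boundary")
    if action.get("invoice_id") not in KNOWN_INVOICES:
        reasons.append("unknown invoice (possible hallucination)")
    allowed = not reasons
    # Every decision, allowed or not, is logged with its reasons.
    log.record({"action": dict(action), "allowed": allowed, "reasons": reasons})
    return allowed


log = AuditLog()
print(guard({"type": "flag_invoice", "invoice_id": "INV-1001"}, log))  # True
print(guard({"type": "issue_refund", "invoice_id": "INV-9999"}, log))  # False
print(log.verify())  # True
```

In a real deployment, the lookup would query the ERP rather than an in-memory set, and the log would be persisted to append-only storage.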

Resolving Baseline Issues for Agentic Model Deployment

Before deploying agentic models, corporate boards must address three fundamental questions to ensure responsible and effective AI integration:

- Accountability for Errors: Who is ultimately responsible when an AI agent makes an error? Establishing clear lines of accountability is crucial for legal, ethical, and operational integrity.

- Audit Trails for Machine Decisions: How can machine decisions be transparently audited? Comprehensive logging and explainable AI (XAI) techniques are vital for understanding the rationale behind AI actions.

- Thresholds for Human Escalation: At what point should human intervention be triggered? Defining precise thresholds ensures that complex or sensitive situations are escalated appropriately.

These questions are further complicated by geopolitical fragmentation. Sovereign cloud infrastructure, sovereign AI models, and data localization mandates are becoming regulatory realities across major global markets. Enterprises must embed deterministic control directly into probabilistic intelligence and treat this as a C-suite mandate rather than an IT project, so that AI deployments stay aligned with broader organizational goals and regulatory requirements.
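One way to picture deterministic control wrapped around probabilistic output is a routing rule. For each action an agent proposes, the rule decides whether the action runs automatically, goes to a human, or is blocked. The sketch below assumes hypothetical thresholds (`min_confidence`, `max_auto_amount`) and a hypothetical `allowed_regions` setting standing in for a data localization mandate. Real values would be set by whoever is accountable for the process. Hard rules are checked first, so no confidence score can override them.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_EXECUTE = "auto_execute"
    HUMAN_REVIEW = "human_review"
    BLOCK = "block"


@dataclass(frozen=True)
class EscalationPolicy:
    # Hypothetical thresholds, to be set by the accountable process owner.
    min_confidence: float = 0.95       # below this, a human decides
    max_auto_amount: float = 10_000.0  # above this financial impact, a human decides
    allowed_regions: frozenset = frozenset({"EU"})  # stand-in for data localization rules


@dataclass(frozen=True)
class Proposal:
    confidence: float  # model confidence in [0, 1]; assumed to be calibrated
    amount: float      # financial impact of the proposed action
    data_region: str   # region where the underlying data resides


def route(p: Proposal, policy: EscalationPolicy) -> Route:
    # Hard constraints first: no confidence score can override localization rules.
    if p.data_region not in policy.allowed_regions:
        return Route.BLOCK
    # Escalate when the model is unsure OR the stakes are high.
    if p.confidence < policy.min_confidence or p.amount > policy.max_auto_amount:
        return Route.HUMAN_REVIEW
    return Route.AUTO_EXECUTE


policy = EscalationPolicy()
print(route(Proposal(0.99, 500.0, "EU"), policy))     # Route.AUTO_EXECUTE
print(route(Proposal(0.80, 500.0, "EU"), policy))     # Route.HUMAN_REVIEW
print(route(Proposal(0.99, 50_000.0, "US"), policy))  # Route.BLOCK
```

Keeping the thresholds in a frozen policy object, separate from the model, lets them be reviewed and audited like any other business rule.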

Structuring Relational Intelligence for Commercial Operations

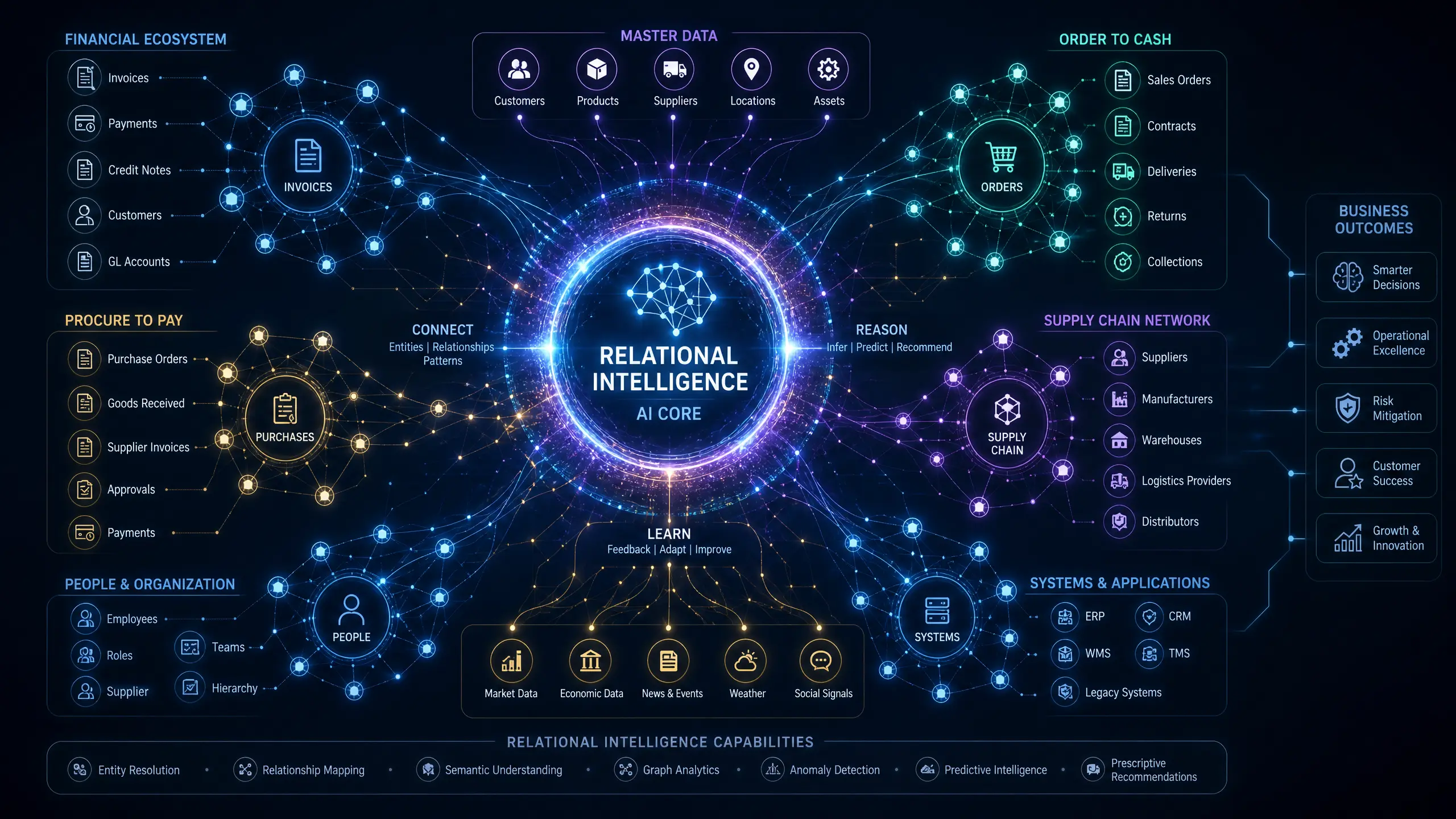

The effectiveness of any AI system depends on the quality of the data and processes it operates on, which Raptopoulos calls the "data foundation moment." Fragmented master data, siloed business systems, and heavily customized Enterprise Resource Planning (ERP) environments introduce dangerous unpredictability and make AI recommendations unreliable. For instance, if an autonomous agent draws on inconsistent data sources to make a critical decision about cash flow or customer relations, the resulting damage can spread instantly and at scale.

To extract tangible enterprise value, organizations must move beyond generic LLMs trained on internet-scale text. Enterprise intelligence must be grounded in proprietary corporate data, such as the orders, invoices, supply chain records, and financial postings generated within business processes. According to SAP, relational foundation models optimized for structured business data outperform generic models in areas such as forecasting, anomaly detection, and operational optimization. This specialized approach keeps AI insights contextually relevant and actionable within the enterprise.

The friction of making a highly customized ERP environment intelligible to a foundation model often stalls deployment. Data engineering teams frequently spend many cycles sanitizing fragmented master data just to establish a baseline for AI ingestion. When a relational model must accurately interpret complex, proprietary supply chain records alongside raw invoice data, the underlying pipelines must deliver that data with minimal latency. Any failure in ingestion can immediately degrade the model's predictive capabilities and render the AI agent functionally dangerous.
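Part of this sanitization work can be automated before anything reaches the model. The sketch below is a simplified illustration, not a production matching engine. It assumes a hypothetical supplier schema (`supplier_id`, `name`, `country`) and flags records with missing required fields. It then groups suppliers whose names collide after case, accent, punctuation, and legal-suffix normalization, so that likely duplicates are resolved by a data steward rather than guessed at by an agent.

```python
import re
import unicodedata
from collections import defaultdict

# Hypothetical minimum schema a supplier record needs before AI ingestion.
REQUIRED = ("supplier_id", "name", "country")
# A few common legal-form suffixes; a real list would be far longer.
LEGAL_SUFFIXES = re.compile(r"\b(inc|ltd|llc|gmbh|ag|corp|co)\b")


def normalize_name(name: str) -> str:
    """Fold accents, case, punctuation, and legal suffixes so near-duplicates collide."""
    ascii_name = unicodedata.normalize("NFKD", name).encode("ascii", "ignore").decode()
    cleaned = re.sub(r"[^a-z0-9 ]", " ", ascii_name.lower())
    return " ".join(LEGAL_SUFFIXES.sub(" ", cleaned).split())


def audit_master_data(records: list[dict]) -> tuple[list[str], list[str]]:
    """Return (IDs ready for ingestion, human-readable issues to resolve first)."""
    issues: list[str] = []
    groups: dict[tuple[str, str], list[str]] = defaultdict(list)
    for r in records:
        missing = [f for f in REQUIRED if not r.get(f)]
        if missing:
            issues.append(f"{r.get('supplier_id') or '?'}: missing {', '.join(missing)}")
            continue
        key = (normalize_name(r["name"]), r["country"].strip().upper())
        groups[key].append(r["supplier_id"])
    ready: list[str] = []
    for ids in groups.values():
        if len(ids) == 1:
            ready.append(ids[0])
        else:  # leave the merge decision to a data steward
            issues.append(f"possible duplicates: {', '.join(ids)}")
    return ready, issues


records = [
    {"supplier_id": "S1", "name": "Müller GmbH", "country": "de"},
    {"supplier_id": "S2", "name": "MULLER", "country": "DE"},
    {"supplier_id": "S3", "name": "Acme Corp.", "country": "US"},
    {"supplier_id": "S4", "name": "", "country": "US"},
]
ready, issues = audit_master_data(records)
print(ready)   # ['S3']
print(issues)  # ['S4: missing name', 'possible duplicates: S1, S2']
```

The point is not the matching heuristic itself, which real master data programs make far more robust, but that ingestion is gated on explicit checks rather than on the model's tolerance for messy input.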

Integrating legacy architecture with modern relational AI necessitates a significant overhaul of deeply entrenched data pipelines. Engineering teams face the daunting task of indexing decades of poorly classified planning data to enable embedding models to generate accurate vector representations. Corporate boards must critically evaluate whether their current data estate is genuinely prepared for AI integration, rather than merely layering probabilistic intelligence over disjointed foundations. This readiness is a prerequisite for achieving meaningful AI-driven transformation.

Designing Intent-Based Interfaces: The Employee Interaction Moment

The paradigm of enterprise application interaction is shifting from static interfaces to generative user experiences, marking what Raptopoulos identifies as the "employee interaction moment." Instead of navigating complex software ecosystems manually, employees will express their intent directly to the system. Consider an employee instructing the software to prepare a briefing for a high-revenue customer visit. AI agents would then orchestrate the necessary workflows, assemble relevant context, and surface recommended actions, significantly streamlining operations.

However, the adoption of these digital teammates by the workforce is contingent upon trust. Employees will only embrace AI systems when they are confident that the system's outputs adhere to established governance boundaries, reflect authentic business rules, and deliver demonstrable productivity gains. This trust is built through consistent performance, transparency, and reliability.

Engineering these systems requires role-specific AI personas tailored to positions such as the CFO, CHRO, or head of supply chain. These personas must be built on trusted data and embedded within familiar corporate workflows to bridge the adoption gap. How to achieve this integration is a design decision with far-reaching consequences. Organizations that invest in AI-native architectures are likely to see faster returns on investment, while those that bolt probabilistic models onto legacy interfaces will struggle with trust, usability, and scalability.

Technology leaders who try to force modern AI orchestration onto monolithic software applications often encounter severe integration delays. Routing model API calls through outdated enterprise middleware can make user interfaces lag, undermining the intent-based workflow. Designing effective role-specific personas demands more than prompt engineering. It requires mapping complex access controls, permissions, and business logic into the context the AI model operates within, so that the AI acts inside the defined organizational boundaries.
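Mapping access controls into an AI persona can be sketched as two checks. First, the model is shown only the tools the persona is entitled to. Second, every call is re-authorized at execution time. The `Persona` type, the tool names, and the permission strings below are hypothetical illustrations rather than any product's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    role: str
    permissions: frozenset  # assumed to mirror the user's existing access roles


# Hypothetical tool catalogue: each tool declares the permission it requires.
TOOLS = {
    "read_cash_position": "finance.read",
    "post_journal_entry": "finance.write",
    "view_salary_bands": "hr.read",
    "reorder_stock": "supply.write",
}


def visible_tools(persona: Persona) -> list[str]:
    """Tools the model is allowed to see (and therefore propose) for this persona."""
    return sorted(tool for tool, perm in TOOLS.items() if perm in persona.permissions)


def invoke(persona: Persona, tool: str) -> str:
    """Re-check authorization at execution time; a model request alone is not enough."""
    required = TOOLS.get(tool)
    if required is None or required not in persona.permissions:
        raise PermissionError(f"{persona.role} may not call {tool}")
    return f"{tool} executed for {persona.role}"  # placeholder for the real call


cfo = Persona("CFO", frozenset({"finance.read", "finance.write"}))
print(visible_tools(cfo))  # ['post_journal_entry', 'read_cash_position']
print(invoke(cfo, "read_cash_position"))  # read_cash_position executed for CFO
```

The double check is deliberate. Hiding tools narrows what the model proposes, while the execution-time check still holds if the model requests something it was never shown.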

Engineering Competitive Defense Through Customer Interactions

The financial returns on AI investments often materialize most rapidly during customer interactions. Training models on proprietary records, internal rules, and historical logs creates a unique layer of customer-specific intelligence that is difficult for competitors to replicate. This strategic advantage is particularly potent in exception-heavy workflows such as dispute resolution, claims processing, returns management, and service routing.

Deploying autonomous agents capable of classifying cases, surfacing relevant documentation, and recommending policy-aligned resolutions transforms these high-cost processes into distinct competitive differentiators. These models continuously adapt based on the outcomes of each interaction, leading to ongoing improvements in service quality and efficiency. Corporate buyers prioritize reliable, relevant, and responsive service over technological gimmicks. Companies that strategically deploy AI to manage heavy workloads, while maintaining strict oversight of the final outputs, construct formidable barriers to entry that generic tools simply cannot penetrate.

Achieving comprehensive corporate intelligence requires the C-suite to orchestrate three distinct layers in parallel, a process Raptopoulos defines as the "strategy moment":

- Embedded Functionality: Integrating persona-driven productivity gains directly into core applications for immediate returns.

- Agentic Orchestration: Facilitating multi-agent coordination across cross-system workflows to enhance operational fluidity.

- Industry-Specific Intelligence: Developing deeply specialized applications to address high-value challenges unique to a particular sector.

A common pitfall for leaders is false sequencing: tackling these layers in the wrong order. Focusing solely on embedded tools can leave significant financial value uncaptured, while aggressively pursuing deep industry applications before establishing proper governance and data maturity can multiply corporate risk. Scaling these models effectively requires aligning corporate ambition with actual technical readiness. To move beyond pilot phases, leadership teams must commit to funding clean core architectures, updating data pipelines, and enforcing cross-functional ownership. The most profitable deployments treat AI as a central operating layer that demands the same rigorous governance as human staff.

Conclusion

The journey toward realizing the full potential of enterprise AI is complex, but the path is clear. Robust AI governance is not merely a regulatory burden. It is a strategic imperative for securing profit margins and sustaining growth. By prioritizing precision, establishing clear accountability, investing in the data foundation, designing intent-based interfaces, and using AI for competitive differentiation, organizations can navigate the complexities of autonomous AI. The governance decisions made today will determine whether AI becomes a durable source of advantage or an expensive lesson in the cost of weak oversight. The future of enterprise AI hinges on disciplined, strategic, and well-governed implementation.

Author: Malik AI Team

Date: 2026-05-04

References:

[1] SAP. (n.d.). SAP: How enterprise AI governance secures profit margins. Artificial Intelligence News. https://www.artificialintelligence-news.com/news/sap-how-enterprise-ai-governance-secures-profit-margins/