AI Runtime OS

As artificial intelligence systems grow in complexity and autonomy, a new foundational layer is rapidly emerging: the AI runtime layer. As of April 2026, this concept is no longer theoretical but a critical component of advanced AI infrastructure, effectively acting as an operating system for AI. This shift signals a maturing AI ecosystem, one that looks beyond individual model performance to system-level orchestration and management.

Historically, AI development focused primarily on model architecture and training. However, as AI applications become more sophisticated and integrated into enterprise workflows, the need for robust management of these models in production has become paramount. The AI runtime layer addresses this by providing a unified framework for managing the entire lifecycle of AI operations, from deployment to execution and optimization.

The Evolution of AI Infrastructure

AI infrastructure has evolved in stages. Initially, it was about raw computational power and data storage. Then came the era of MLOps, which focused on streamlining the machine learning pipeline. Now, in 2026, we are witnessing the rise of a meta-layer that sits above individual models and MLOps tools, orchestrating their interactions and optimizing their performance in real time.

This runtime layer is essential because modern AI systems are rarely monolithic. They often involve multiple models, agents, and external tools working in concert. Without a dedicated orchestration layer, managing these distributed components becomes a significant challenge, leading to inefficiencies, increased costs, and reliability issues.

Key Functions of an AI Runtime Layer

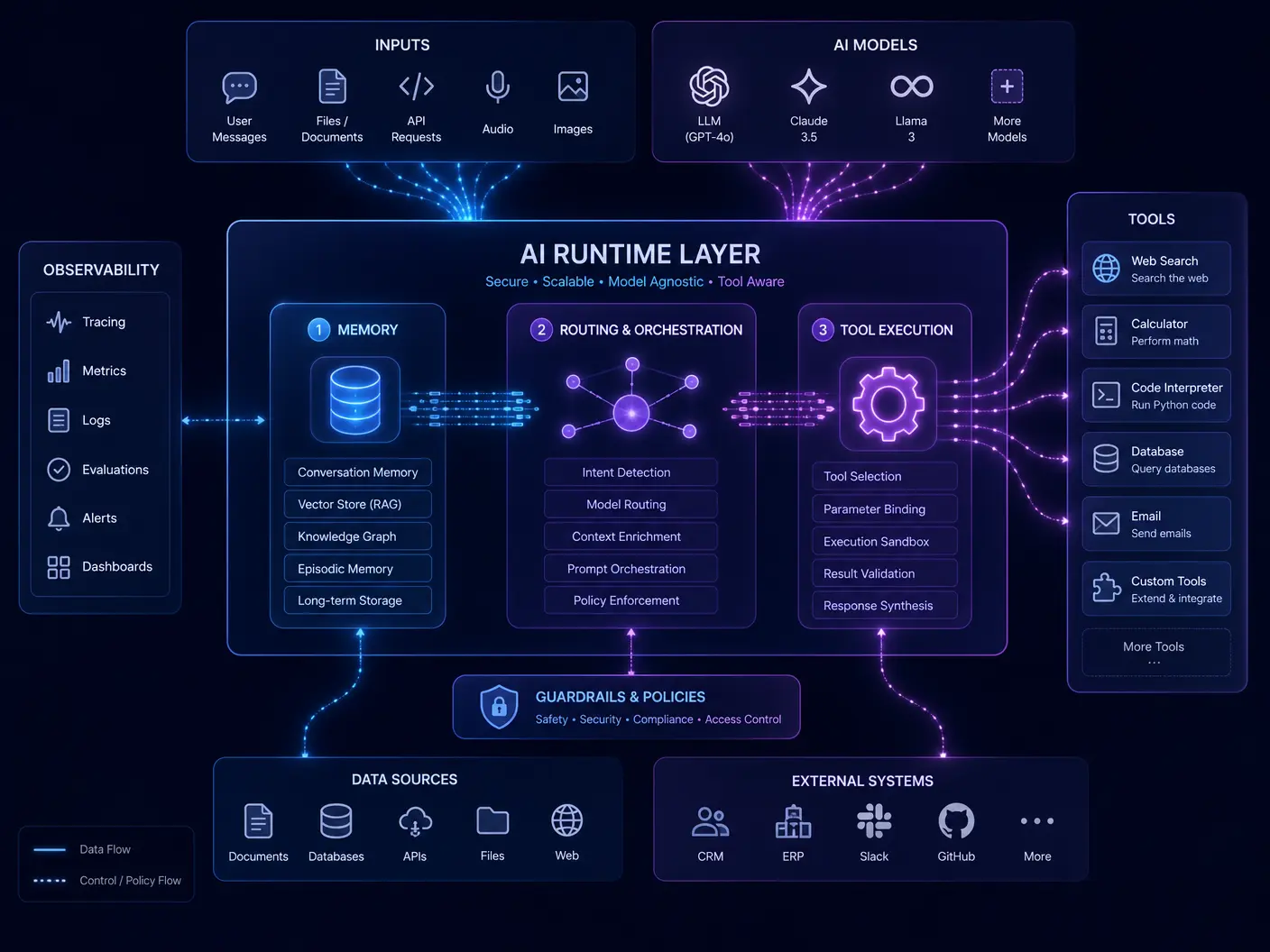

An effective AI runtime layer performs a multitude of critical functions, transforming how AI applications are built and deployed:

- Memory Management: Manages the context window and KV cache for large language models, reusing cached attention state to reduce redundant computation and deciding what is kept in context so that longer interactions remain tractable.

- Routing and Model Switching: Dynamically selects the most appropriate model for a given task, optimizing for cost, latency, and accuracy. This allows for specialized models to be used for specific sub-tasks.

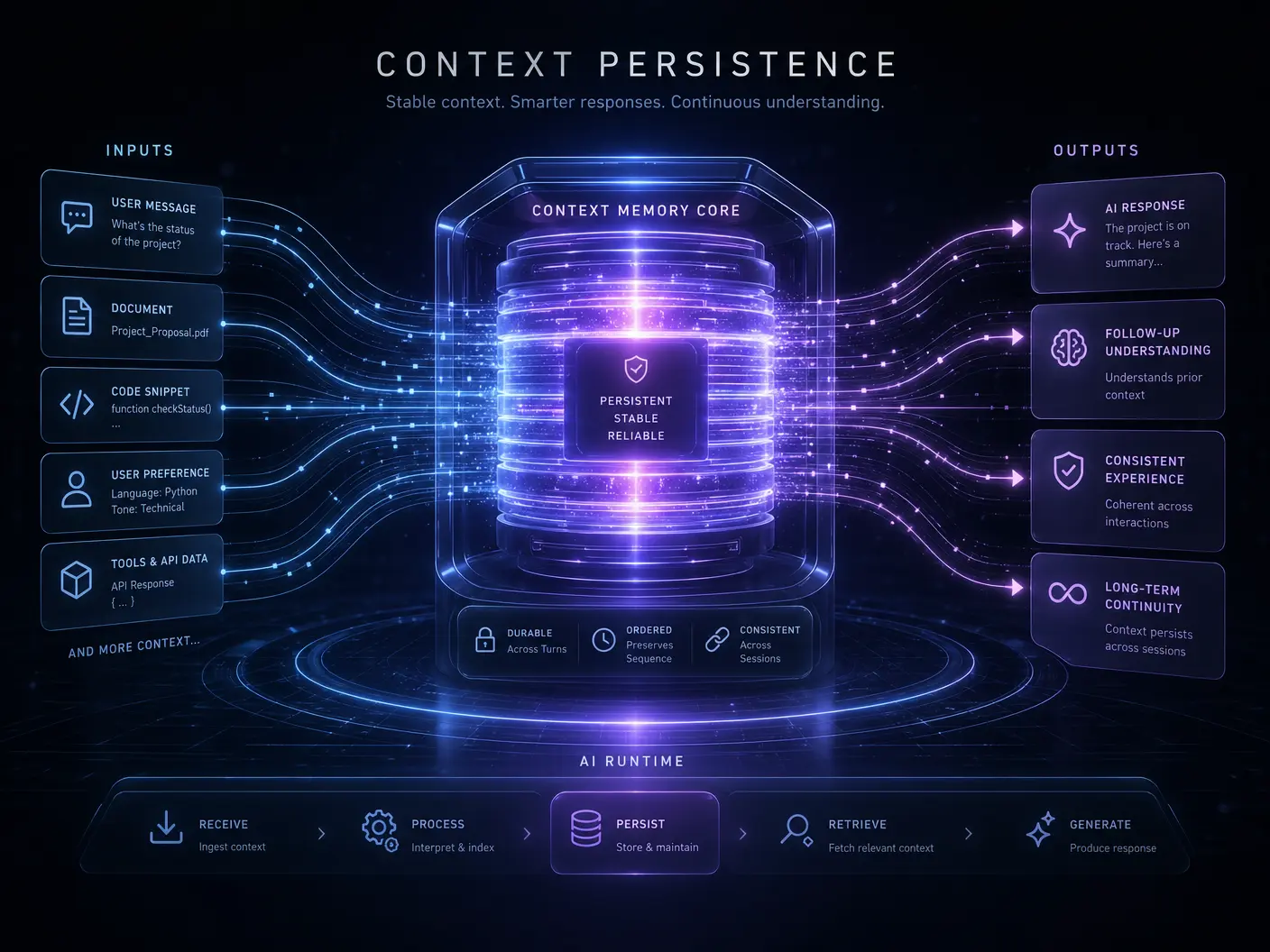

- Context Persistence: Maintains conversational state and historical interactions across multiple turns and sessions, providing a seamless user experience.

- Cost Optimization: Monitors resource utilization and intelligently scales AI workloads, minimizing operational expenses.

- Tool Execution: Manages the invocation and integration of external tools and APIs, enabling AI agents to interact with the real world.

- Observability and Monitoring: Provides real-time insights into AI system performance, identifies bottlenecks, and flags potential issues.
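The routing function in the list above can be illustrated with a minimal sketch. It assumes a hypothetical catalog of models with made-up cost, latency, and quality figures, and one simple policy: pick the cheapest model that satisfies the caller's quality and latency constraints. The names `ModelProfile`, `route`, and `CATALOG` are illustrative and do not belong to any particular product; a production router would likely use richer signals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical description of one model available to the runtime."""
    name: str
    cost_per_1k_tokens: float  # illustrative figure, not real pricing
    p50_latency_ms: float      # illustrative median latency
    quality: float             # illustrative accuracy score in [0, 1]


def route(models, min_quality, max_latency_ms):
    """Return the cheapest model that meets both constraints."""
    eligible = [
        m for m in models
        if m.quality >= min_quality and m.p50_latency_ms <= max_latency_ms
    ]
    if not eligible:
        raise LookupError("no model satisfies the constraints")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


# Made-up catalog used only for this example.
CATALOG = [
    ModelProfile("small-fast", 0.10, 120, 0.70),
    ModelProfile("medium", 0.50, 400, 0.85),
    ModelProfile("large-accurate", 2.00, 1500, 0.95),
]

choice = route(CATALOG, min_quality=0.8, max_latency_ms=1000)
print(choice.name)  # medium
```

Filtering on hard constraints first and then minimizing cost keeps the policy easy to reason about. A weighted score across cost, latency, and accuracy is a common alternative when the constraints are soft.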

This comprehensive management capability allows organizations to deploy and scale complex AI solutions with greater confidence and efficiency. The runtime layer acts as the central nervous system, coordinating all components to achieve optimal outcomes.
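To make the coordinating role more concrete, here is a toy sketch that combines three of the functions listed above: tool execution, context persistence, and observability. The `MiniRuntime` class and its methods are hypothetical names invented for this illustration. State is held in memory only, whereas a real runtime would presumably persist sessions and export metrics to external systems.

```python
import time
from collections import defaultdict
from typing import Callable


class MiniRuntime:
    """Toy runtime: registers tools, records per-session history and timings."""

    def __init__(self):
        self.tools: dict[str, Callable[..., object]] = {}
        self.sessions: dict[str, list[dict]] = defaultdict(list)  # context persistence
        self.metrics: list[dict] = []                             # observability

    def register_tool(self, name, fn):
        self.tools[name] = fn

    def call_tool(self, session_id, name, **kwargs):
        """Run a registered tool; return (result, ok) and log the call."""
        if name not in self.tools:
            raise KeyError(f"unknown tool: {name}")
        start = time.perf_counter()
        try:
            result, ok = self.tools[name](**kwargs), True
        except Exception as exc:
            # Return the failure as data so the calling agent can react to it.
            result, ok = repr(exc), False
        elapsed_ms = (time.perf_counter() - start) * 1000
        self.metrics.append({"tool": name, "ok": ok, "ms": elapsed_ms})
        self.sessions[session_id].append(
            {"tool": name, "args": kwargs, "result": result, "ok": ok}
        )
        return result, ok


runtime = MiniRuntime()
runtime.register_tool("add", lambda a, b: a + b)
result, ok = runtime.call_tool("session-1", "add", a=2, b=3)
print(result, ok)                          # 5 True
print(len(runtime.sessions["session-1"]))  # 1
```

Every call passes through a single entry point, so history and metrics are captured uniformly without each tool having to implement logging itself.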

Impact on Enterprise AI Adoption

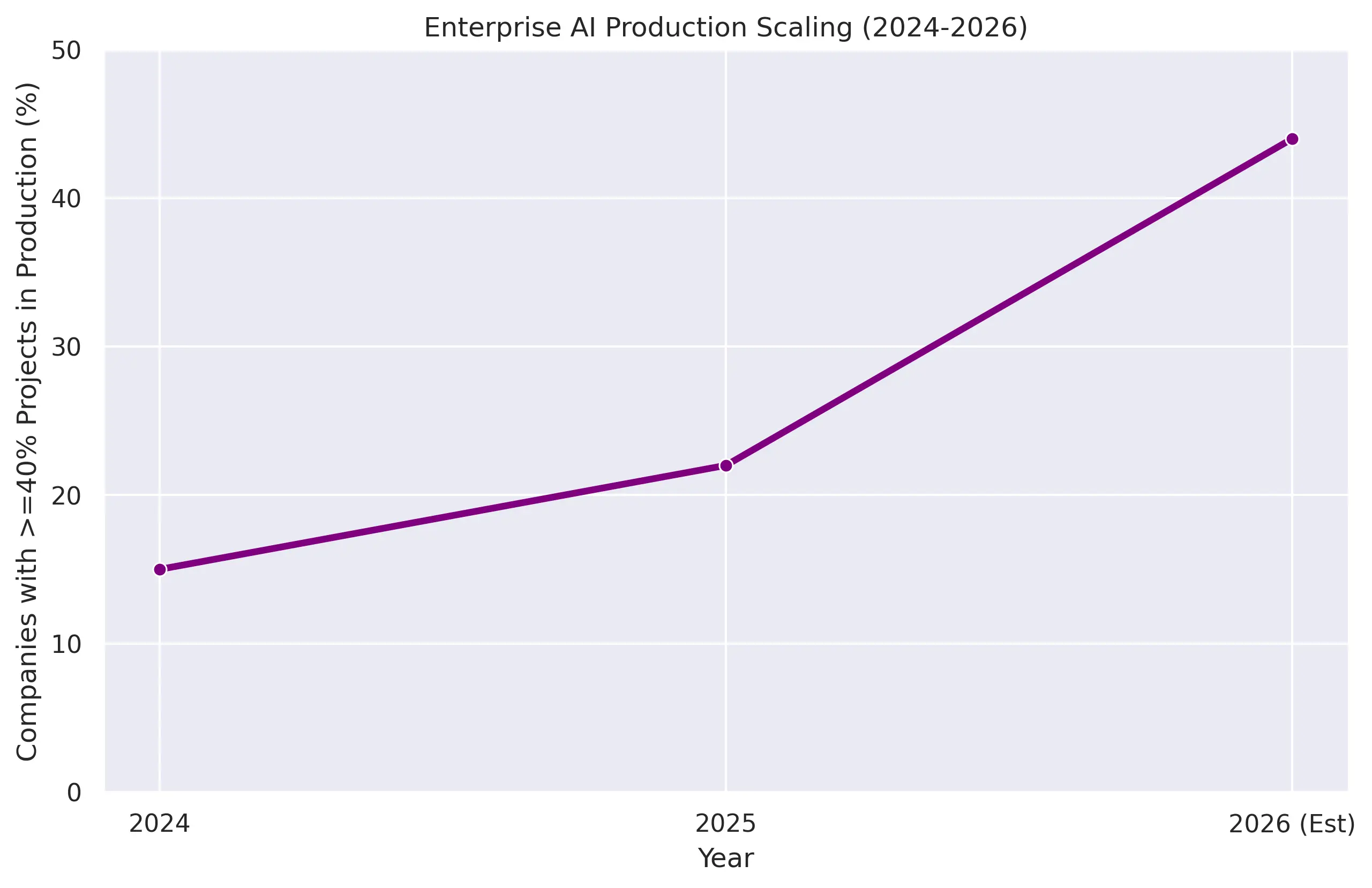

The emergence of AI runtime layers is contributing to the accelerated adoption of AI within enterprises. As AI systems become more manageable and reliable, businesses are more willing to invest in and deploy them at scale. Deloitte's 2026 AI report highlights this trend, noting that the number of companies with 40% or more of their AI projects in production is set to double [4]. This indicates a clear shift from experimental pilot projects to widespread operational integration.

Furthermore, the increased accessibility and manageability provided by runtime layers are empowering a broader range of employees to leverage AI. Worker access to AI tools surged by 50% in 2025, demonstrating a growing democratization of AI capabilities across organizations [4]. This trend is expected to continue as runtime layers make it simpler to deploy and interact with sophisticated AI models.

The Future of AI Orchestration

The development of AI runtime layers is still in its early stages, but its trajectory is clear. We can expect these systems to become even more sophisticated, incorporating advanced features such as self-healing capabilities, proactive anomaly detection, and more intelligent resource allocation. The goal is to create AI systems that are not only powerful but also resilient, adaptable, and cost-effective.

The competitive landscape in AI infrastructure is heating up, with major players and innovative startups vying to define the standards for AI runtime. The focus will increasingly be on interoperability, security, and the ability to seamlessly integrate with existing enterprise IT ecosystems. The ultimate vision is an AI infrastructure that is as robust and invisible as modern cloud computing platforms.

Conclusion

The AI runtime layer is rapidly becoming the indispensable operating system for artificial intelligence. By providing comprehensive management, orchestration, and optimization capabilities, it is enabling the widespread adoption and scaling of complex AI systems across industries. As we move further into 2026, the evolution of these runtime layers will be crucial in unlocking the full potential of autonomous AI, transforming how businesses operate and innovate. The future of AI is not just about smarter models, but about smarter ways to manage and deploy them.

References

[1] Vishal Mysore. "The Biggest AI Trends and Tools Emerging in April 2026." Medium, April 2026.

[2] NVIDIA Blog. "How AI Is Driving Revenue, Cutting Costs and Boosting Productivity." March 2026.

[3] National University. "131 AI Statistics and Trends for 2026." March 2025.

[4] Deloitte US. "The State of AI in the Enterprise - 2026 AI report." 2026.

[5] Infotech. "AI Trends 2026." 2026.