GitHub Copilot Token Charges

Explore the implications of GitHub Copilot's transition to per-token AI charges and its potential impact on developers and enterprises.

Malik Farooq

May 6, 2026

Deep Dive

Per-Token AI Charges Come to GitHub Copilot: A Shift in the AI Economy

The economic model underpinning artificial intelligence services is undergoing a significant transformation. Historically, many AI tools, including popular coding assistants like GitHub Copilot, have operated on a flat-rate subscription basis. That is now changing: GitHub Copilot moves to a per-token charging model starting June 1, 2026. This change aligns its pricing with the API charges common among large language models (LLMs) and reflects a broader industry trend. This article examines the new pricing structure, its implications for developers and enterprises, and how it mirrors the evolving economics of AI.

From Flat Rate to Per-Token: Understanding the Change

Previously, GitHub Copilot offered a straightforward subscription model where users received a set number of 'Premium Requests.' A complex coding task or a trivial query each counted as a single request. This model, while simple, did not always reflect the actual computational resources consumed by the underlying LLMs.

The new per-token pricing scheme will measure most requests by the number of tokens processed by the LLM at the heart of Copilot, counting both the input sent to the model and the output it generates. As a result, cost will scale directly with the length and complexity of the code and queries processed.

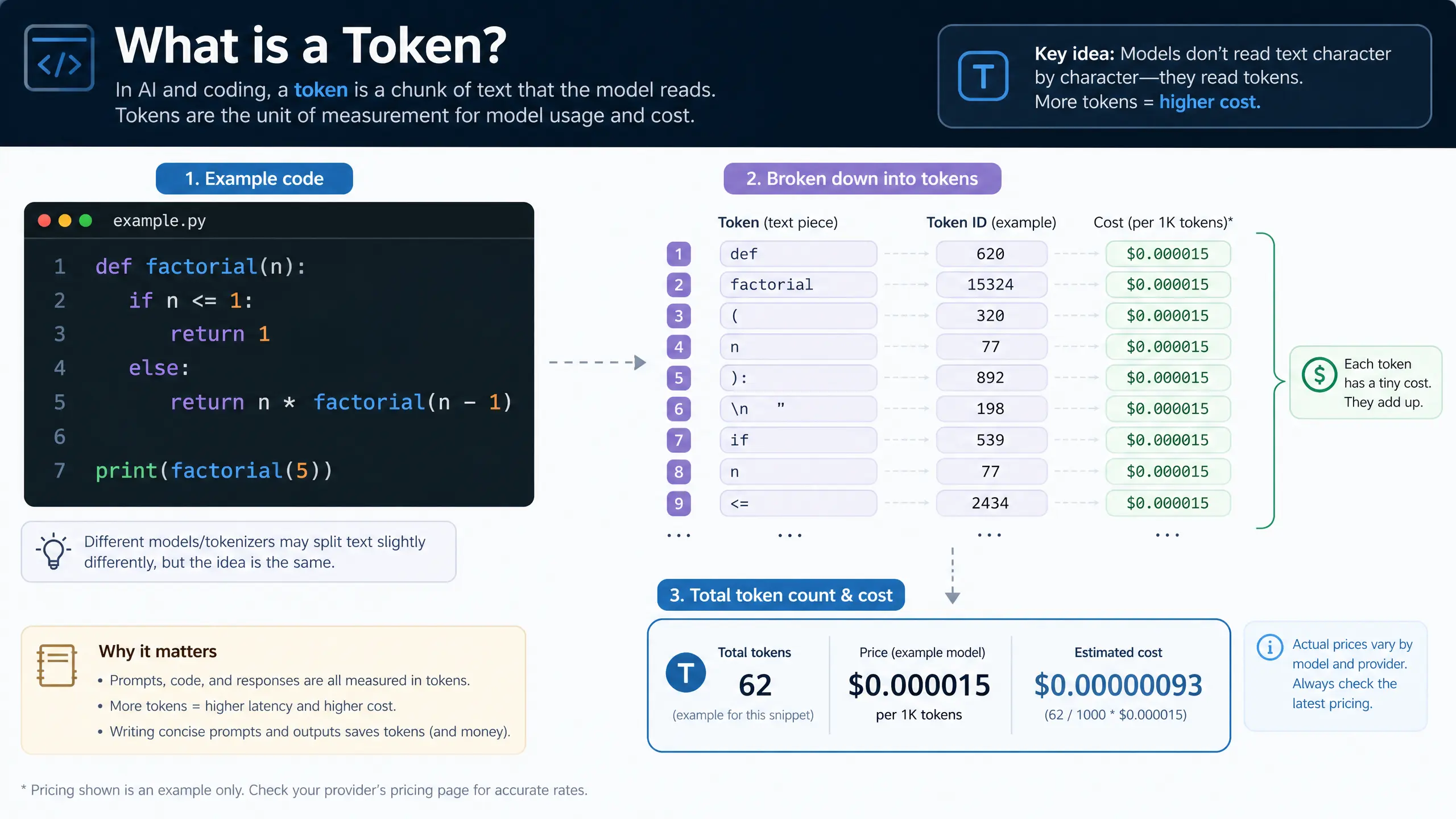

What are Tokens and How are They Measured?

A token is generally understood to represent approximately three-quarters of a word, so a text of 10,000 words equates to roughly 13,000 tokens (10,000 / 0.75 ≈ 13,333). In a development context, if Copilot examines a body of code comprising 10,000 'words' (expressions, statements, variable names, functions), a single query would consume roughly 13,000 tokens from a user's monthly allotment. Both prompt text (inputs) and Copilot's outputs contribute to the token count.
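As a rough illustration, the three-quarters-of-a-word rule of thumb can be turned into a quick estimator. This is a minimal Python sketch that assumes the 0.75 words-per-token ratio holds; real tokenizers vary by model and often split code (symbols, indentation, identifiers) differently from prose, so treat the results as ballpark figures. The function names are hypothetical and not part of any Copilot API.

```python
def estimate_tokens(word_count: int, words_per_token: float = 0.75) -> int:
    """Rough token estimate from a word count.

    Heuristic only (assumes ~0.75 words per token); this is not a real tokenizer.
    """
    if words_per_token <= 0:
        raise ValueError("words_per_token must be positive")
    return round(word_count / words_per_token)


def estimate_query_tokens(input_words: int, output_words: int) -> int:
    """Both the prompt (input) and the model's reply (output) count toward usage."""
    return estimate_tokens(input_words) + estimate_tokens(output_words)


if __name__ == "__main__":
    # A 10,000-word code context on its own
    print(estimate_tokens(10_000))  # 13333
    # The same context plus a 500-word reply
    print(estimate_query_tokens(10_000, 500))  # 14000
```

With a 10,000-word context and a 500-word reply, the estimate comes to about 14,000 tokens, which shows why long replies as well as large contexts eat into the allotment.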

AI Credits and Pricing Tiers

The pricing tiers for GitHub Copilot will remain at their current levels, but instead of a fixed number of queries, users will receive 'AI Credits' of equivalent value. For instance, a base-tier Copilot Pro subscriber ($10 per month) will receive 1,000 credits; GitHub states that one AI Credit is currently worth one US cent, so the allotment matches the $10 subscription price. The number of tokens each credit buys will vary depending on factors such as the model used, the input/output mix, the cache size (data held in the LLM's memory for context), and the specific feature requested. Simple queries will consume fewer tokens, while multi-agent queries involving complex, lengthy codebases will deplete AI Credit accounts more quickly. Queries to advanced frontier models will also cost more.

It is important to note that code completions (similar to a phone's auto-complete function) and Next Edit suggestions will remain free, offering some compensatory benefits to users.
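To show how the factors listed above might combine, here is a hypothetical credit calculator in Python. The model names, the per-model rates, and the discounted rate for cached input are invented placeholders, not GitHub's published prices. The only details taken from the article are that one AI Credit is worth one US cent and that code completions and Next Edit suggestions are free.

```python
from dataclasses import dataclass

# ASSUMPTION: hypothetical rates in AI Credits per million tokens.
# Illustrative placeholders only, NOT GitHub's actual pricing.
HYPOTHETICAL_RATES = {
    "standard-model": {"input": 100.0, "cached_input": 10.0, "output": 400.0},
    "frontier-model": {"input": 500.0, "cached_input": 50.0, "output": 2000.0},
}

# From the article: completions and Next Edit suggestions remain free.
FREE_FEATURES = {"code_completion", "next_edit"}

# From the article: one AI Credit is currently worth one US cent.
USD_PER_CREDIT = 0.01


@dataclass
class Request:
    feature: str              # hypothetical labels, e.g. "chat", "code_completion"
    model: str                # key into HYPOTHETICAL_RATES
    input_tokens: int         # total prompt/context tokens sent to the model
    cached_input_tokens: int  # part of input_tokens assumed to be served from cache
    output_tokens: int        # tokens generated by the model


def credits_for(req: Request) -> float:
    """Estimate the AI Credits one request consumes under the hypothetical rates."""
    if req.feature in FREE_FEATURES:
        return 0.0
    if not 0 <= req.cached_input_tokens <= req.input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    rates = HYPOTHETICAL_RATES[req.model]
    fresh_input = req.input_tokens - req.cached_input_tokens
    weighted = (
        fresh_input * rates["input"]
        + req.cached_input_tokens * rates["cached_input"]
        + req.output_tokens * rates["output"]
    )
    return weighted / 1_000_000  # rates are per million tokens


def credits_to_usd(credits: float) -> float:
    """Convert credits to US dollars at one cent per credit."""
    return credits * USD_PER_CREDIT


if __name__ == "__main__":
    examples = [
        Request("chat", "standard-model", 13_333, 0, 667),
        Request("chat", "frontier-model", 13_333, 0, 667),
        Request("chat", "standard-model", 13_333, 10_000, 667),
        Request("code_completion", "frontier-model", 2_000, 0, 40),
    ]
    for req in examples:
        c = credits_for(req)
        print(f"{req.feature:>15} {req.model:>15}: {c:.4f} credits (${credits_to_usd(c):.5f})")
```

Under these made-up rates, the same 13,333-token prompt costs about 1.6 credits on the standard model, about 8 credits on the frontier model, and about 0.7 credits on the standard model when 10,000 of its input tokens are served from cache, while the completion request costs nothing. The exact numbers are fictional; the point is the spread that model choice, output length, and context reuse can produce under per-token billing.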

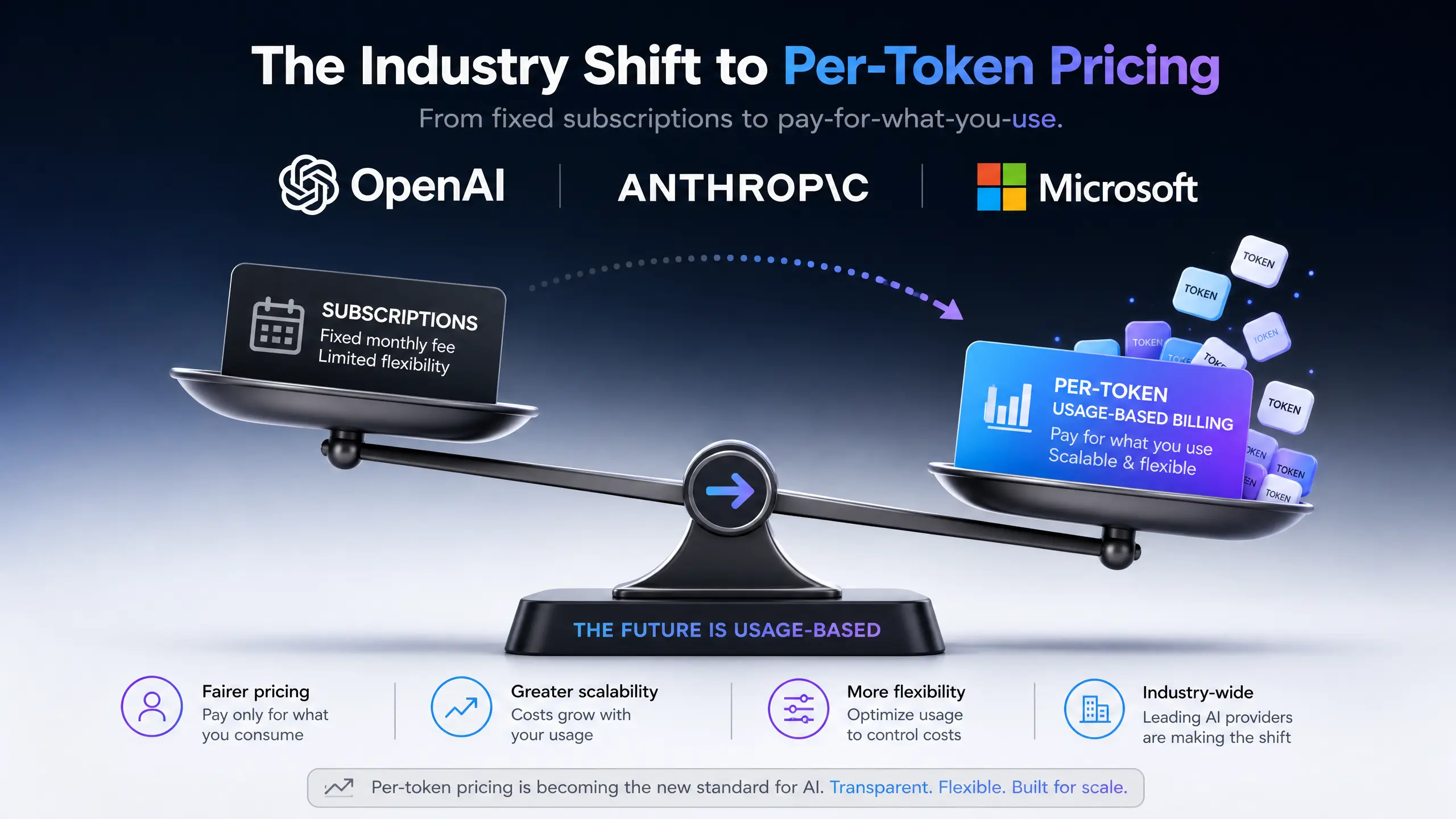

Industry-Wide Shift to Per-Token Pricing

GitHub's pricing changes are not isolated; they reflect a broader industry trend. Companies like Anthropic and OpenAI have already transitioned their enterprise customers to token-based billing. While Microsoft, GitHub's owner, has historically subsidized Copilot through revenues from its other divisions, this shift indicates a move towards a more economically sensible model from Microsoft's perspective.

Impact on Developers and Enterprises

This new billing model immediately forces both new and existing users to become aware of their token spend per query, a metric that flat-rate subscriptions previously abstracted away. While the change may make economic sense for providers, it could discourage the exploration and testing that new users often undertake.

For businesses deploying AI coding agents in their development teams, the cost implications of this industry-wide shift are significant. For example, Uber's CTO reported that the company had already spent its entire 2026 AI budget, with 11% of its code updates now written by AI, primarily using Anthropic's Claude coding agents. This highlights how quickly costs can escalate under a per-token model.

Beyond the IT department, companies utilizing AI automation should anticipate that complex tasks, especially those involving unsupervised agentic LLMs for extended periods, could also transition to a similar per-token basis. Consequently, the efficiency gains delivered by AI in the workforce will need to be carefully weighed against potential increases in AI vendor bills.

Conclusion: Navigating the New AI Economy

GitHub Copilot's move to per-token AI charges marks a pivotal moment in the AI economy. This shift, driven by the need for more accurate resource allocation and alignment with LLM API pricing, will undoubtedly influence how developers and enterprises interact with and budget for AI tools. While it introduces a new layer of cost awareness, it also underscores the growing maturity of the AI industry. Organizations must adapt by carefully monitoring token usage, optimizing their AI workflows, and strategically evaluating the cost-benefit of advanced AI capabilities to ensure continued innovation and profitability in this evolving landscape.

Real-World Examples and Industry Insights

- Cost Optimization: Developers will need to optimize their prompts and code structures to minimize token usage, fostering more efficient interaction with AI coding assistants.

- Budgeting for AI: Enterprises will require more granular budgeting for AI tools, moving from fixed subscriptions to variable costs based on actual usage.

- Competitive Landscape: This pricing model could influence the competitive landscape, potentially favoring AI providers that offer more cost-effective token usage or alternative billing structures.
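For the budgeting point, a minimal sketch of usage monitoring might look like the following: given a monthly credit allowance and observed daily consumption, it reports the day the allowance would run out and gives a simple linear projection of month-end spend. The function names and sample figures (a 1,000-credit allowance, matching the Copilot Pro example, and a steady 60 credits per day) are hypothetical; a real deployment would pull usage figures from whatever reporting the vendor provides.

```python
from typing import Optional, Sequence


def exhaustion_day(allowance: float, daily_usage: Sequence[float]) -> Optional[int]:
    """Return the 1-based day on which cumulative usage first exceeds the allowance.

    Returns None if the allowance lasts through every day in daily_usage.
    """
    spent = 0.0
    for day, used in enumerate(daily_usage, start=1):
        spent += used
        if spent > allowance:
            return day
    return None


def projected_month_end(spent_so_far: float, days_elapsed: int, days_in_month: int = 30) -> float:
    """Linearly extrapolate month-end spend from the average daily rate so far."""
    if days_elapsed <= 0:
        raise ValueError("days_elapsed must be positive")
    return spent_so_far / days_elapsed * days_in_month


if __name__ == "__main__":
    allowance = 1_000    # credits, e.g. the Copilot Pro tier described above
    usage = [60.0] * 30  # hypothetical steady usage of 60 credits per day
    print("Allowance exceeded on day:", exhaustion_day(allowance, usage))  # 17
    print("Projected month-end spend:", projected_month_end(420.0, 7))  # 1800.0
```

A linear projection is deliberately crude, but it is enough to flag early in the month that a team is on track to spend nearly twice its allowance, which is the kind of granular signal a variable-cost budget needs.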

Key Statistics

- June 1, 2026: Effective date for GitHub Copilot's per-token charging model.

- 1 AI Credit = 1 US Cent: The current conversion rate for AI Credits.

- Uber's AI Spend: Uber's CTO reported that the company had already spent its entire 2026 AI budget, with 11% of its code updates written by AI.
