CoreWeave: Powering the AI Revolution Through Scalable GPU Cloud Infrastructure

Status

Success

Problem Solved

The rapid growth of AI, machine learning, and graphics-intensive workloads has created massive demand for GPU-based compute resources. Traditional cloud providers often do not offer flexible, cost-effective access to large-scale GPU infrastructure, which creates bottlenecks for AI startups, animation studios, and enterprises that require high-performance computing.

CoreWeave addresses this gap by providing a specialized, scalable GPU cloud platform optimized for AI training, inference, and visual effects rendering. The platform broadens access to cutting-edge GPU resources through infrastructure tailored to these workloads, enabling faster experimentation and deployment.

Why it Succeeded

CoreWeave succeeded by focusing exclusively on GPUs, unlike major cloud providers with broad but less specialized offerings. This laser focus allowed them to optimize performance and pricing for GPU-centric workloads, attracting AI developers, studios, and enterprises seeking flexible and cost-efficient alternatives.

Additionally, early strategic partnerships with AI leaders such as OpenAI helped CoreWeave scale rapidly and validate its platform. Its operational innovation and customizable solutions contributed to robust customer retention and expansion.

Funding and Valuation

- Total Funding: Over $150 million in venture capital

- Peak Valuation: Estimated around $1 billion (unicorn status)

- Key Investors: Craft Ventures, Coatue Management, and other prominent technology-focused investors

How it Works

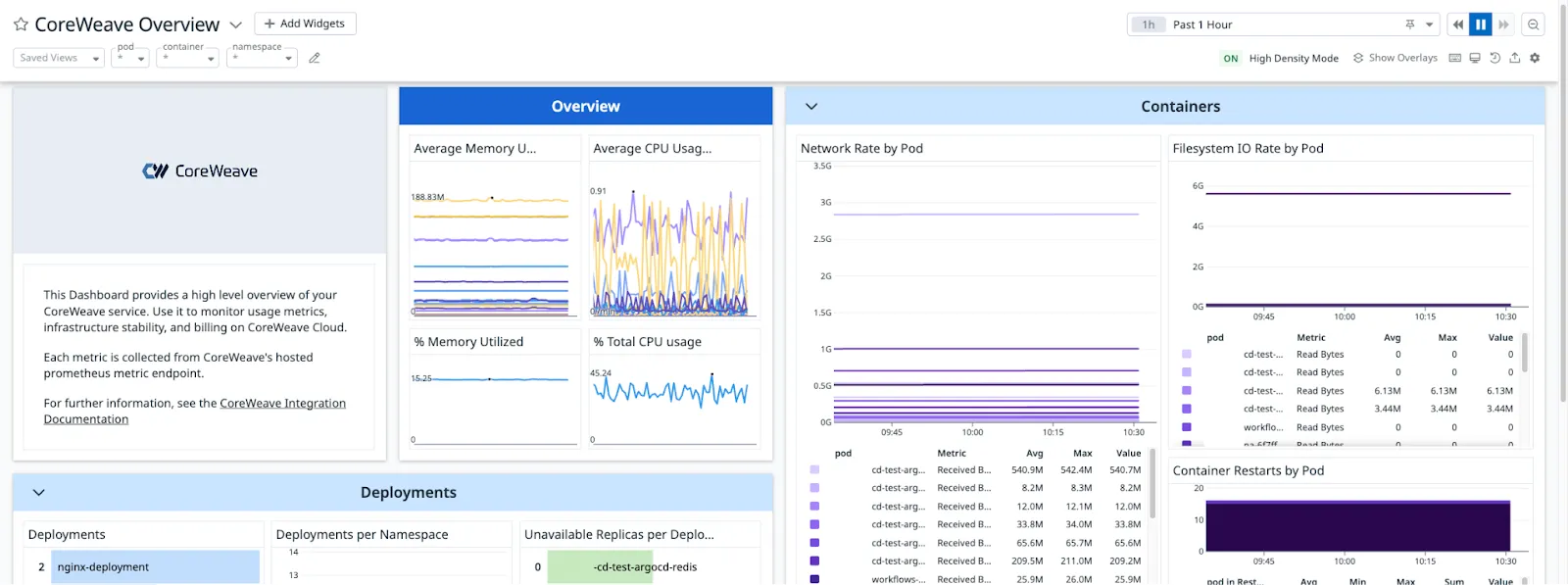

CoreWeave operates a cloud infrastructure platform built around high-density GPU clusters. The architecture integrates modern container orchestration with custom scheduling optimized for diverse AI and graphics workloads. Key technical components include:

- Flexible GPU Provisioning: Users can request and scale GPU instances on demand, selecting from various GPU types (NVIDIA A100, V100, etc.) tailored to workload requirements.

- Kubernetes-based Orchestration: Workloads are deployed through Kubernetes, which enables straightforward deployment and scaling of AI pipelines and rendering workloads.

The platform exposes APIs and SDKs that let developers integrate GPU compute into their existing workflows.
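
To make the provisioning and orchestration points above concrete, here is a minimal sketch of how a GPU workload is commonly described on a Kubernetes cluster. It is not CoreWeave's actual API or configuration. The function name `gpu_pod_manifest`, the node label key `example.com/gpu-model`, and the container image are hypothetical and used only for illustration. The `nvidia.com/gpu` resource name is the one conventionally exposed by the NVIDIA device plugin for Kubernetes.

```python
import json

# Hypothetical node label key (an assumption for this sketch); real clusters
# define their own labels for distinguishing GPU models.
GPU_LABEL_KEY = "example.com/gpu-model"


def gpu_pod_manifest(name, image, gpu_model, gpu_count):
    """Build a Kubernetes Pod manifest (as a dict) that requests GPUs."""
    if gpu_count < 1:
        raise ValueError("gpu_count must be at least 1")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            # Steer the pod onto nodes carrying the requested GPU model.
            "nodeSelector": {GPU_LABEL_KEY: gpu_model},
            "containers": [
                {
                    "name": "worker",
                    "image": image,
                    # GPUs are requested as an extended resource under limits.
                    "resources": {"limits": {"nvidia.com/gpu": gpu_count}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical job name and image, shown only to illustrate usage.
    manifest = gpu_pod_manifest(
        "train-job", "example.com/trainer:latest", "A100", 4
    )
    print(json.dumps(manifest, indent=2))
```

Under these assumptions, changing `gpu_model` or `gpu_count` is all it takes to target a different GPU type or scale the request. That is the kind of flexibility the provisioning bullet describes.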

Perspective

CoreWeave’s specialization in GPU infrastructure differentiates it in a highly competitive cloud market dominated by hyperscalers. By aligning closely with evolving AI compute needs and maintaining agility, CoreWeave has positioned itself as a crucial enabler of innovation in AI and graphics.

Looking forward, the company faces the challenge of scaling sustainably while competing with increasingly GPU-focused offerings from established cloud giants. However, its deep expertise, customer relationships, and focused product roadmap position it well to capitalize on the growing demand for accessible high-performance GPU cloud resources.

In essence, CoreWeave exemplifies how nimble, domain-focused startups can carve out significant market share in infrastructure by solving specialized industry pain points effectively.

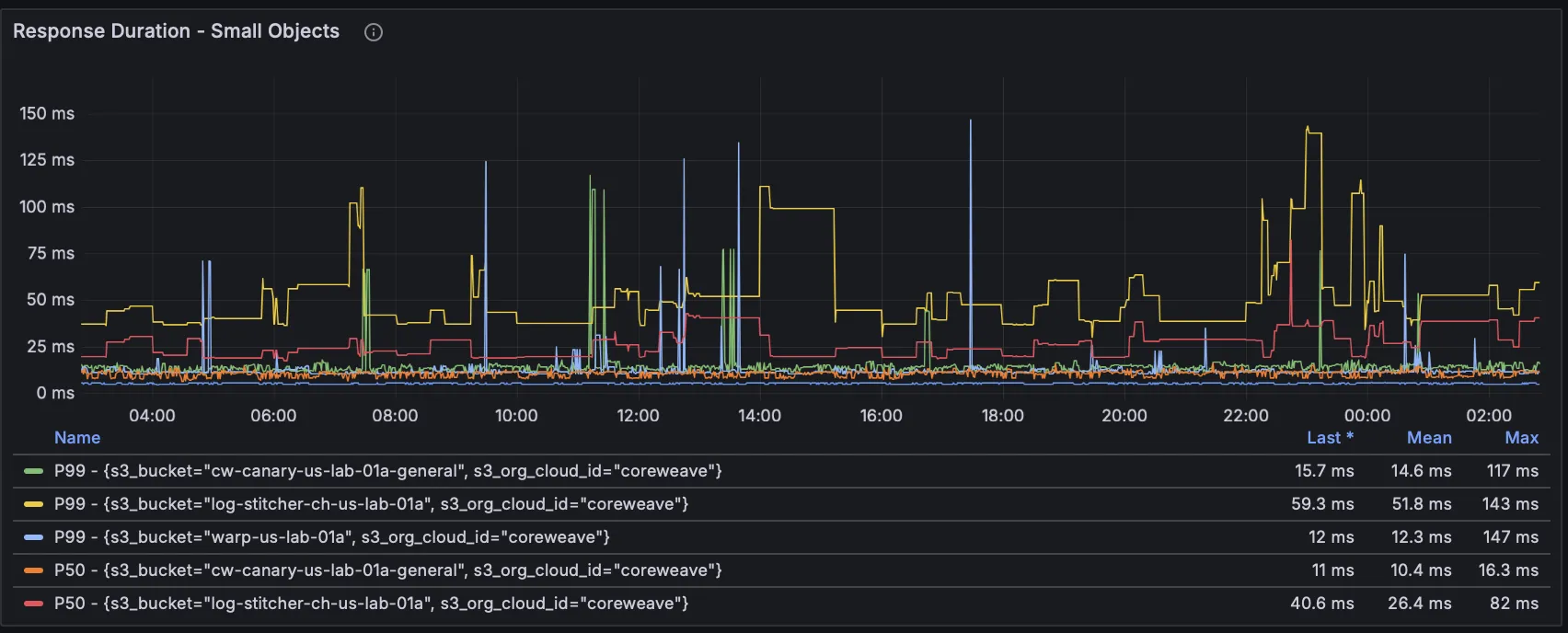
